CPM SDK for Next.js

Modular prompts. One interface. Full control. A lightweight SDK to build AI-powered workflows in your Next.js apps — with modular prompts, unified API routing and zero backend config.

Install in under a minute

Three steps: add the SDK, link to BrinPage Cloud, and launch the local dashboard.

Add the SDK

# Install

npm install @brinpage/cpm

Link to BrinPage Cloud (.env)

BRINPAGE_API_KEY=your-cloud-key

BRINPAGE_BASE_URL=https://cloud.brinpage.com

No provider keys in your project. Requests are routed via BrinPage Cloud.
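A small startup check can catch a missing or malformed value before the first request goes out. The sketch below is only an illustration: `readCpmEnv` and `CpmEnv` are hypothetical names, not part of `@brinpage/cpm`; only the two variable names come from the `.env` lines above.

```typescript
// Hypothetical helper, NOT part of @brinpage/cpm.
// It validates the two variables shown in the .env snippet above.
type CpmEnv = { apiKey: string; baseUrl: string };

function readCpmEnv(env: Record<string, string | undefined>): CpmEnv {
  const apiKey = (env["BRINPAGE_API_KEY"] ?? "").trim();
  const baseUrl = (env["BRINPAGE_BASE_URL"] ?? "").trim();

  if (apiKey === "") {
    throw new Error("BRINPAGE_API_KEY is missing; add it to .env");
  }
  if (baseUrl === "") {
    throw new Error("BRINPAGE_BASE_URL is missing; add it to .env");
  }

  // new URL() throws a TypeError if the value is not a valid absolute URL.
  const parsed = new URL(baseUrl);

  // Return the origin so a trailing slash in .env does not matter.
  return { apiKey, baseUrl: parsed.origin };
}
```

In a Next.js project this could be called once on the server with `readCpmEnv(process.env)`, so a misconfigured deployment fails early with a readable message.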

Run the dashboard

npx brinpage cpm

# runs on http://localhost:3027

Minimal setup for Next.js (App Router). All configuration—providers, models, temperature, max tokens, embeddings—is managed in the CPM Dashboard and synced via BrinPage Cloud. Your code just initializes the client and calls cpm.chat() or cpm.ask().
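To give a feel for how a call to `cpm.ask()` might sit inside an App Router route, here is a rough sketch. This page does not show the SDK's method signatures, so the `AskClient` interface (a single `ask(question)` method returning a string) is an assumption made for illustration, and a stub stands in for the real client so the code runs without a key or network access. Check the Installation guide for the actual API.

```typescript
// ASSUMPTION: the real signature of cpm.ask() is not documented on this page.
// This interface is a hypothetical stand-in used only for illustration.
interface AskClient {
  ask(question: string): Promise<string>;
}

// Small helper that builds a JSON Response with the given status code.
function jsonResponse(data: unknown, status: number): Response {
  return new Response(JSON.stringify(data), {
    status,
    headers: { "Content-Type": "application/json" },
  });
}

// Returns a handler in the shape App Router expects from a route file:
// a function that takes a Request and resolves to a Response.
function makeAskHandler(client: AskClient) {
  return async function handle(request: Request): Promise<Response> {
    let body: unknown;
    try {
      body = await request.json();
    } catch {
      return jsonResponse({ error: "Body must be valid JSON" }, 400);
    }

    // Validate input before spending a model call on it.
    const question = (body as { question?: unknown } | null)?.question;
    if (typeof question !== "string" || question.trim() === "") {
      return jsonResponse({ error: "Field 'question' is required" }, 400);
    }

    const answer = await client.ask(question);
    return jsonResponse({ answer }, 200);
  };
}

// Stub client so the sketch is runnable on its own.
const stubClient: AskClient = {
  ask: async (question) => `stub answer to: ${question}`,
};

const POST = makeAskHandler(stubClient);
```

In a real project the handler would live in a route file such as `app/api/ask/route.ts`, be exported as `POST`, and receive the initialized CPM client instead of the stub.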

Want the full setup, tips, and troubleshooting? Visit the CPM Installation guide.

Connect once. Build anywhere.

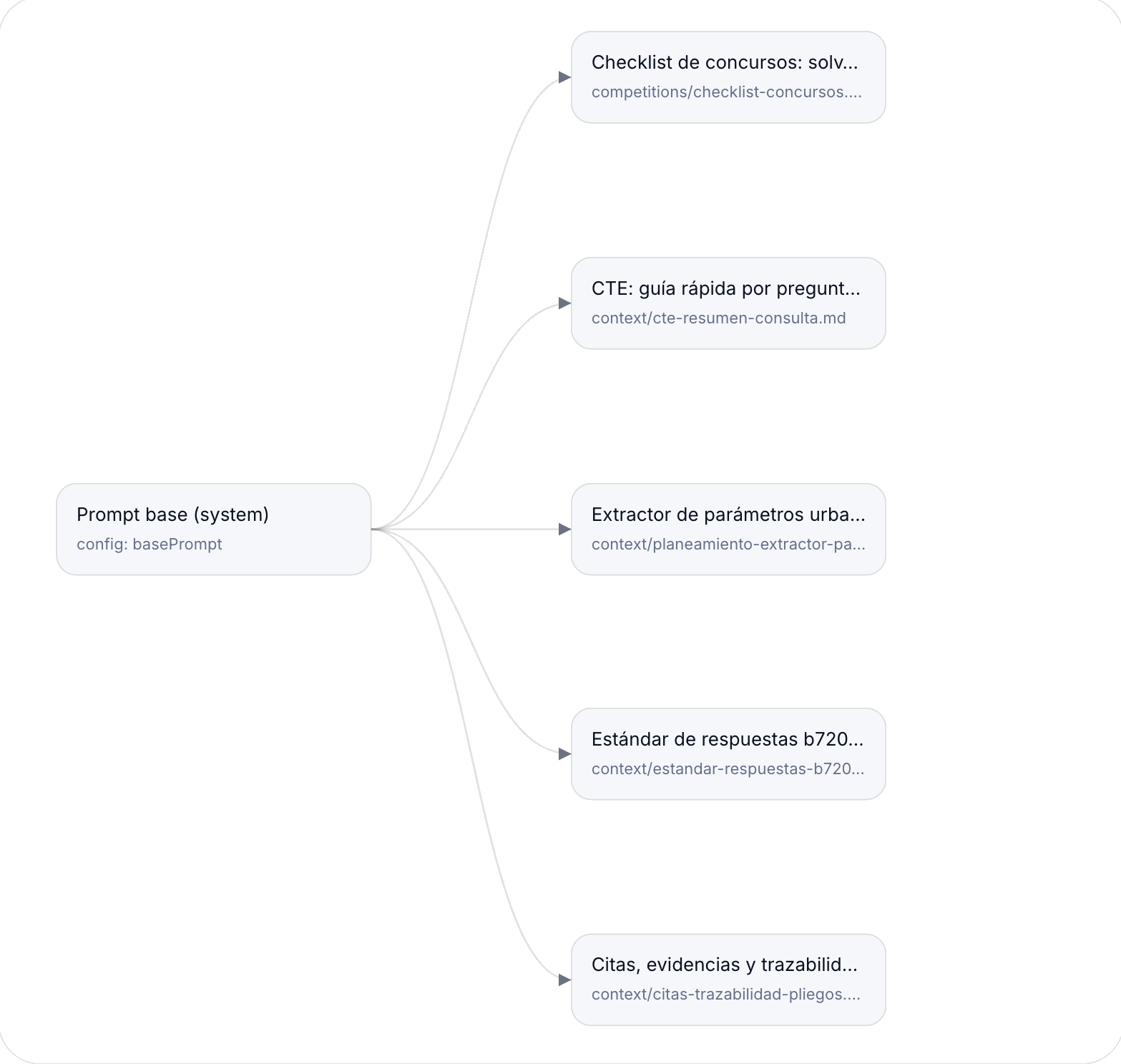

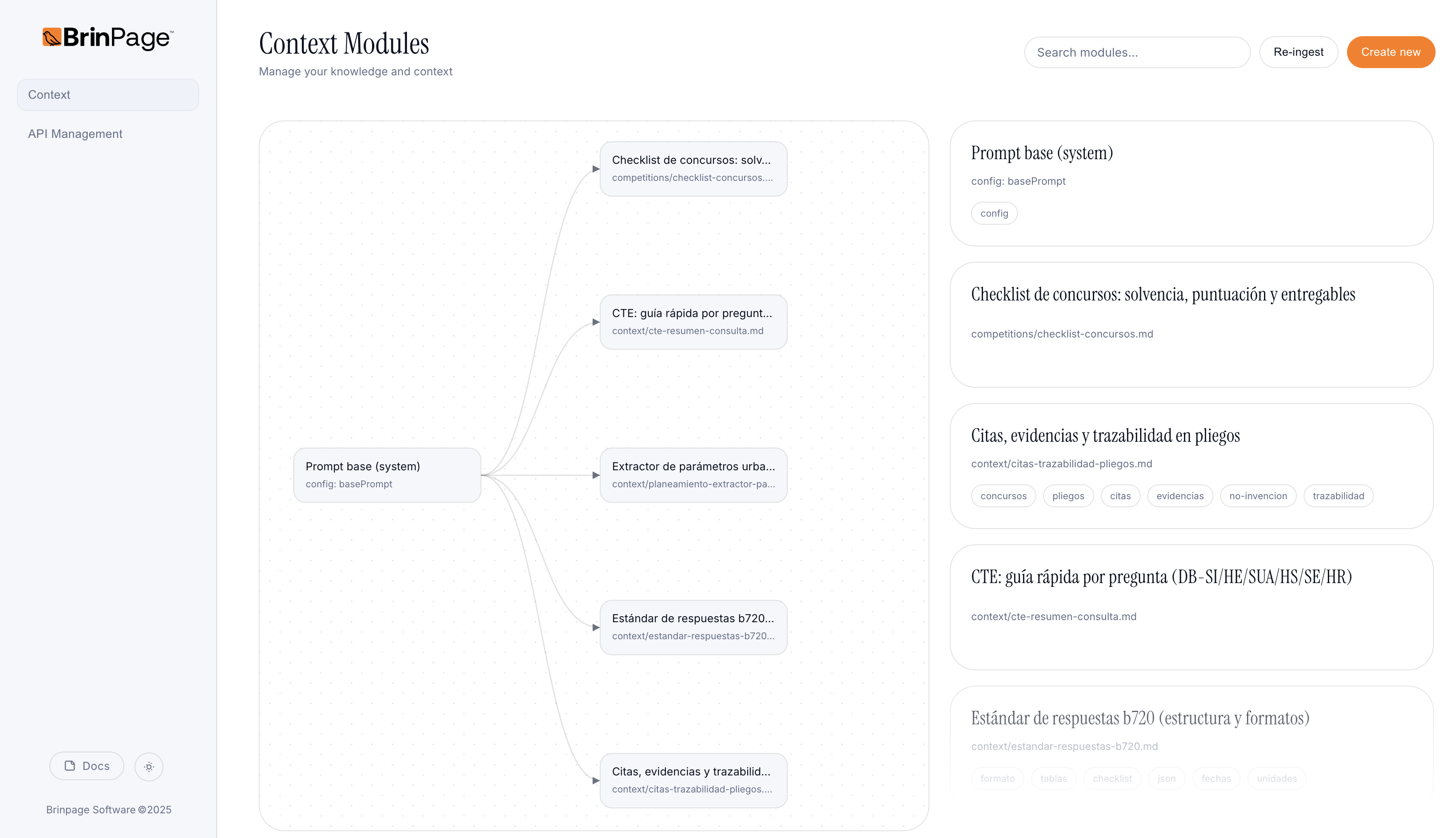

Modular knowledge for zero guesswork.

CPM treats prompts as components: a Base + stackable Modules. Declare what your task needs; CPM builds the final prompt deterministically—the same inputs always produce the same output prompt.

- No more giant strings or copy-paste drift.

- Attach only the modules a task actually needs.

- Smaller, focused prompts → fewer tokens, clearer model behavior.
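The Base + Modules idea can be sketched in a few lines. This is not CPM's implementation: the `PromptModule` shape, the `buildPrompt` function, and the `## id` section headers are all hypothetical, chosen only to show one way composition can be made deterministic (deduplicate the requested module ids, then sort them, so call-site order never changes the result).

```typescript
// Hypothetical data model: CPM's real module format is not shown on this page.
type PromptModule = { id: string; text: string };

function buildPrompt(
  base: string,
  library: PromptModule[],
  needed: string[]
): string {
  const byId = new Map<string, PromptModule>();
  for (const mod of library) {
    byId.set(mod.id, mod);
  }

  // Deduplicate and sort the requested ids so that the order in which a
  // caller lists modules does not affect the final prompt.
  const ids = Array.from(new Set(needed)).sort();

  const parts = ids.map((id) => {
    const mod = byId.get(id);
    if (!mod) {
      throw new Error(`Unknown module: ${id}`);
    }
    return `## ${id}\n${mod.text.trim()}`;
  });

  return [base.trim(), ...parts].join("\n\n");
}

const library: PromptModule[] = [
  { id: "tone", text: "Answer concisely." },
  { id: "json", text: "Reply with valid JSON." },
  { id: "legal", text: "Do not give legal advice." },
];

// Only the modules the task needs are attached; "legal" is left out.
const promptA = buildPrompt("You are a support assistant.", library, ["tone", "json"]);
const promptB = buildPrompt("You are a support assistant.", library, ["json", "tone", "json"]);
// promptA === promptB, regardless of order or duplicates in the request.
```

Sorting is just one possible ordering rule; an explicit priority field on each module would work equally well, as long as the rule does not depend on call-site order.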

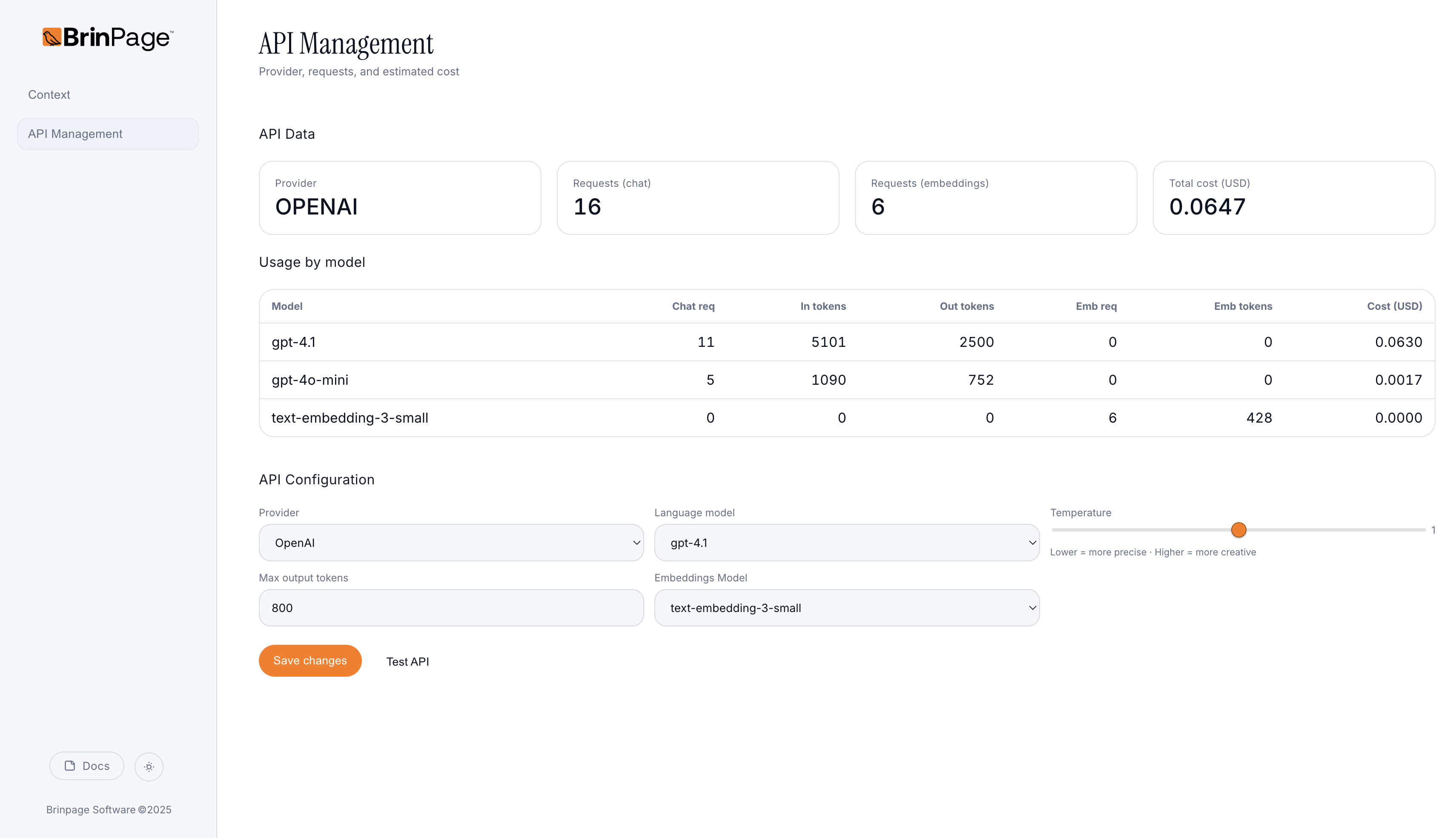

Your control room on localhost.

Run the dashboard at http://localhost:3027. Switch models, tune parameters, and inspect every request, all connected through BrinPage Cloud. No provider setup. No lock-in.

- Unified routing: OpenAI ↔︎ Gemini (more soon), managed via BrinPage Cloud.

- Tuning from the UI: model, temperature, and caps without touching code.

- Request inspector: diff modules and check the final JSON payload before execution.

Efficiency by design.

Less repeated context. Smarter routing. Fewer retries thanks to preflight validation.

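Cost ≈ (prompt tokens + response tokens) × attempts, where attempts counts the first call plus any retries. CPM aims to reduce both factors: the tokens sent per call and the number of retries.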
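A quick worked example of the cost relation, reading the multiplier as the total number of attempts (the first call plus any retries). The token counts and retry counts below are made-up illustrative numbers, not measurements, and `estimateTokens` is a hypothetical helper.

```typescript
// Illustrative only: all numbers below are invented for the example.
function estimateTokens(
  promptTokens: number,
  responseTokens: number,
  retries: number
): number {
  // The first call always happens; each retry repeats the full exchange.
  const attempts = 1 + retries;
  return (promptTokens + responseTokens) * attempts;
}

// One large prompt that fails once and is retried.
const monolithic = estimateTokens(1200, 300, 1); // (1200 + 300) * 2 = 3000

// A smaller, focused prompt that succeeds on the first attempt.
const modular = estimateTokens(400, 300, 0); // (400 + 300) * 1 = 700

console.log({ monolithic, modular });
```

Multiplying the token total by a provider's per-token price would turn this into a money estimate; that step is omitted because prices vary by provider and model.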